GPT-3 : Unicorn Rocket Launcher

The impact of generative algorithms in web design and distribution

(Credit - Anders Hoff)

By now you've likely seen examples of GPT-3 writing code. Of course you have: you subscribe to this newsletter and saw me write about it back in May after Microsoft Build. It's pretty cool to watch, and it has drawn wide-ranging responses, from astonishment to chagrin. It may actually 'suck' at coding, clearly making some errors, and it may look quite familiar next to the autocomplete tools already built into IDEs. It is, however, quite different. GPT-3 is a massive, state-of-the-art language model that has 'learned' how to solve a variety of interesting problems; the coding in particular it just picked up by reading GitHub (you too, huh?). Watching it write code from very basic natural language requests, one is inclined to think about the implications of this tool. You could make some semi-logical forecasts: Will it make developers more productive? How will it affect the labor pool? Will applications and features be released faster? But this is the BackBounce, where we 'backcast' - diving deeper down to the inflection point and analyzing some of the plausible (or preposterous) futures.

There are two large inflection points to consider here. The first is that the efficiency of machine learning models has been consistently improving over time. I'm going to refer to this as Dez-Brown's law (after Danny Hernandez and Tom Brown), as they have done the best analysis of the phenomenon that was available at the time of writing. This is, in many ways, a complicated thing to measure. Varying domain applications of ML, advancements in hardware, and many other factors make it much harder to pin down than Moore's law, but it's occurring nonetheless. I highly recommend you read their paper and form your own opinion. Some bottlenecks are plausible down the road, but it would be illogical to look at the industry trends and infer that efficiency in ML will not continue improving at some rate for the immediate future. This is an important inflection to keep in mind in the backdrop of a discussion around GPT-3: an incredibly large model, trained on an incredible amount of data, likely at a very large cost.
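To put rough numbers on that trend, here is a small back-of-the-envelope sketch. It assumes the headline figure from Hernandez and Brown's paper as I understand it (roughly 44x less compute to reach AlexNet-level performance over the seven years from 2012 to 2019) and a two-year doubling period for Moore's law. Both inputs are illustrative assumptions, so check them against the paper before leaning on the output.

```python
import math

def doubling_time_months(improvement_factor, elapsed_months):
    """Months per doubling implied by an overall improvement factor."""
    return elapsed_months / math.log2(improvement_factor)

def growth_factor(elapsed_months, doubling_months):
    """Overall improvement after elapsed_months at a fixed doubling period."""
    return 2 ** (elapsed_months / doubling_months)

# Assumed inputs (not stated in this article): 44x over 7 years for ML
# algorithmic efficiency, and a 24-month doubling period for Moore's law.
ml_doubling = doubling_time_months(44, 7 * 12)
moore_gain = growth_factor(7 * 12, 24)

print(f"Implied ML efficiency doubling time: {ml_doubling:.1f} months")
print(f"Moore's law gain over the same 7 years: {moore_gain:.1f}x")
```

Under those assumptions the algorithmic gains alone outpace what hardware scaling would have delivered over the same window, which is the point of treating this as its own inflection.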

The other important inflection we will consider is the algorithmic distribution of content. This trend is also incredibly hard to quantify, but impossible to ignore. It seems obvious when you think of the predominant algorithmic distribution vehicles: Facebook's News Feed, Twitter switching away from the chronological timeline, Google incorporating deep rank, etc. There are two large reasons these companies switched in the first place: squeezing a massive amount of data down a funnel to create user relevance or utility, and then, of course, optimizing that funnel to sell ads. The latter has turned the funnel into an hourglass shape by increasing the prevalence of algorithmic discovery. This is where you get the YouTubes, the Spotifys, and the much-debated TikTok. Users no longer trust an inner circle of carefully selected, intentional associations to help curate or source interesting content - they're letting an algorithm decide, and why not? Spotify is the best radio DJ, and YouTube knows I want to watch Garry Tan without me ever searching for him. The inflection is that as the amount of digital data grows and algorithmic distribution improves, we have become, and continue to become, more comfortable with algorithmic sourcing of content. This is especially prevalent among younger generations, and TikTok is a great example. Ignoring for one second that it's likely spyware you should delete, and looking only at the product, it is a 'social' application with fewer user-facing methods for intentional personal curation and significantly more reliance on algorithmic distribution. This is often the draw for its users - you don't need a massive army of followers to go viral or increase your reach - the algorithm will do it for you if it so decides. For an example of how effective this algorithmic shift is, one can look at TikTok's meteoric rise in consumption.
(I recommend reading more about this from Turner Novak)

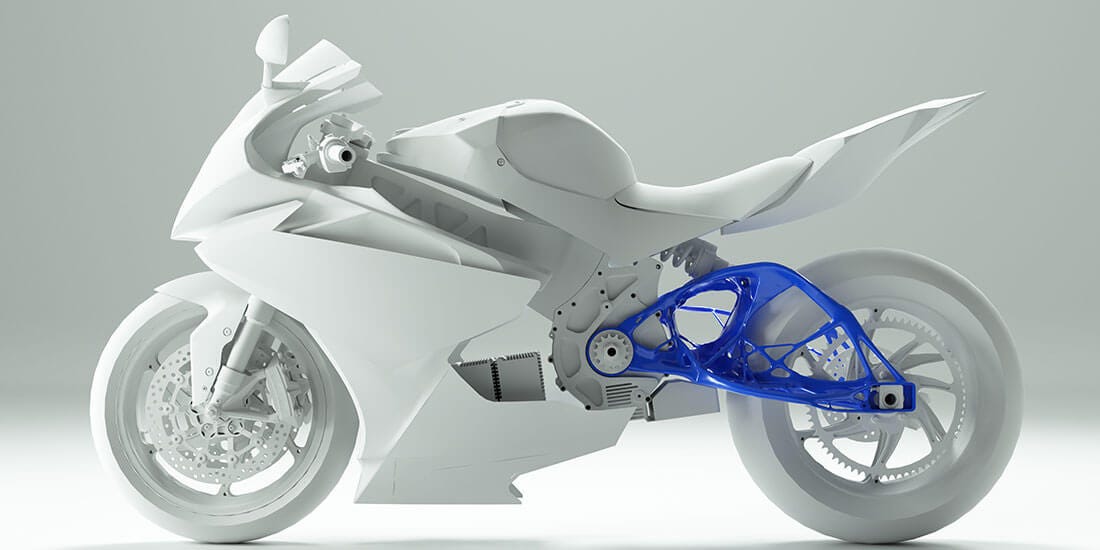

Generative design is an ever-growing suite of tools that use ML and large datasets to create a dizzying array of things. Autodesk's software is an example of generative design in the manufacturing space, and it's an incredibly effective way to increase the iteration capability of engineers. Input feature parameters and get a myriad of pre-designed options, many of which may seem incredibly abstract and distinctly different from what traditional engineers without augmentation would have imagined. The selling point for generative design in manufacturing is the 'in silico' approach - it's significantly cheaper to design optimal versions digitally than to cut and assemble parts for testing in the real world.

We've also seen generative design in bot-augmented articles, images, videos, and now website component development. Will the efficiency edge that generative design brings to physical engineering carry over to software engineering? I think so.

GPT-3 writing code is an example of how several factors are decreasing the expense and time required to iterate on products made of code: Moore's law, Dez-Brown's law, a larger repository of available knowledge and information, and various tools such as extended open source libraries, a growing number of no-code products, and other autocomplete IDE products such as TabNine. One thing investors are certain to consider as the speed of production increases is @patio11's law - that internet niches are larger than you think, even accounting for internet niches being larger than you think - and consequently there is plenty of demand for niche products. This fundamental shift shows up in the focus on the passion economy, in products that meld the real world and the digital, and, on a larger scale, in unbundling itself. Large content platforms are splintering into microcosms that focus on specific communities. This is great news for creators and consumers who are passionate about unique things, but as always the key to sustaining these communities will be distribution in a more fragmented web.

Another implication investors should keep in mind is the nature of design and product creation in the startup lifecycle. There's a long-standing process of product development that you're likely familiar with: founders find a problem or an interesting space, build an initial product, and iterate frequently in an effort to find product market fit. Quantitative analysis of user behavior for this process has become a large industry in and of itself (Mixpanel, for example), and qualitative analysis has become integral enough to support standalone products, such as Viable Fit.

As we move into a product design and iteration process whose cost in capital and time is rapidly diminishing, thanks to the prevalence of generative design products and the increasing efficiency of data analytics, a fundamental shift in the purpose of design iteration will occur. In much the same way that algorithms in social content evolved from meeting a distribution need into an incredible optimization tool, so likely will product design itself. Twitter is in many ways a generalized product for all users, customized to some extent by the particular user, and then significantly individualized by algorithms. The shift of younger consumer cohorts relinquishing dashboard-controlled curation to the algorithms will carry over into products that aren't specifically content oriented, including SaaS and other tools. This may initially sound more like the 'preposterous' future, but I'm inclined to map it somewhat closer to the plausible one. The inescapable flaw in design iterations that optimize for product market fit is the flaw of averages: the average size fits no one. Optimizing feature sets and UI flows to keep the largest number of users happy makes sense, but it will inevitably leave some users who dislike the product. This is generally fine, since you don't need to win over every potential user to take a large market share, but it then requires augmentation with user retention methods, such as faux network effects or various forms of capture, to hold a sufficient moat. Looking at the explosion of SaaS products, a 'rebundling' could require lots of M&A or, alternatively, a company that can rapidly iterate different UIs and feature sets tailored to specific users under one umbrella.
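The flaw of averages is easy to see in a toy simulation. The sketch below is purely illustrative: it assumes each user's preference for each feature is an independent draw from a standard normal distribution, and it counts a user as 'fitting' the average product only if they land within a small tolerance of the average on every feature. The function name, the tolerance, and the distribution are my own assumptions, not measurements of any real product.

```python
import random

def share_near_average(num_users, num_features, tolerance=0.3, seed=0):
    """Fraction of simulated users close to the average on every feature."""
    rng = random.Random(seed)
    # Hypothetical preference scores: one independent draw per user per feature.
    users = [
        [rng.gauss(0.0, 1.0) for _ in range(num_features)]
        for _ in range(num_users)
    ]
    averages = [
        sum(user[i] for user in users) / num_users
        for i in range(num_features)
    ]
    near = sum(
        all(abs(user[i] - averages[i]) <= tolerance for i in range(num_features))
        for user in users
    )
    return near / num_users

# The share of users the "average" design fits shrinks as features are added.
for features in (1, 3, 10):
    print(features, share_near_average(10_000, features))
```

With one feature a decent share of users sit near the average; with ten, almost nobody does. A product tuned to the average across many feature decisions is tuned to a user who barely exists.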

An indicator of the likelihood of this occurring is the exploding API space, where some products are just an interface over other products 'stitched' together, and some products' primary purpose is managing other API integrations, like Zapier and Autodesk. Think about a generative workflow interface where you communicate your intentions and the actual workflows are designed by the backend through a process of automated code creation and API selection. As the interface interacts with your workflows, it can begin predicting a better UI and tools that are more useful to your goals - a self-organizing SaaS product designed specifically for your team's exact needs. There are so many existing SaaS tools with massive efficiency upsides that many SMBs are not taking advantage of, likely due to SaaS burnout: how many tools does your team have time to onboard to, code into existing workflows, pay for, etc.? This points to a market inefficiency for companies that can take advantage of it, but they in turn face a dilemma - the expense of iterating and optimizing feature releases or orchestrating partnerships with existing companies, which may offer little more ROI than increased user retention.

Given this prospective future, how does one go about taking advantage of the inflection? It will certainly require more effort than getting trial access to the GPT-3 API and asking it to design a predictive SaaS tool that optimizes for individual customers (right?). It will take significantly more complicated engineering than that: an ensemble of generative design models that can integrate with user optimization models and, ultimately, distribution methods. It will also take an A+ team that can deal with the intricacies of such a product and, of course, a logical MVP product line - the 'we sell books' of generative design. A team could begin building in this space today, however.

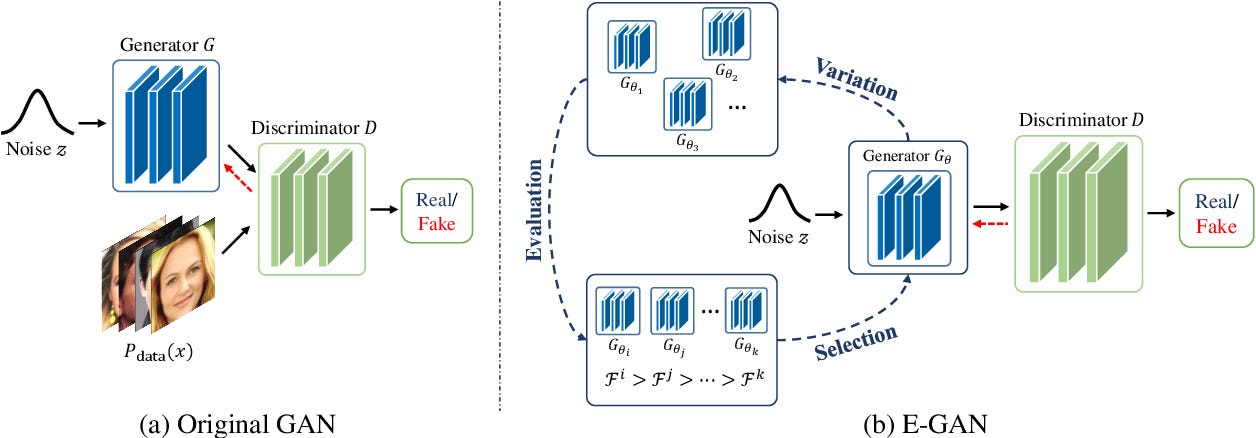

One puzzle piece in this process may be the E-GAN. Generative Adversarial Networks have been used for some time to generate realistic images and video, but they have some drawbacks, specifically training instability and mode collapse. Researchers Chaoyue Wang, Chang Xu, Xin Yao, and Dacheng Tao have shown that an evolutionary approach, in which candidate generators are evaluated against the discriminator and only the best performers are kept, can improve stability. This methodology could become an important element when considering the potential issues with rapidly iterating software built with generative design.
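For a feel of the evolutionary idea, here is a deliberately tiny sketch in plain Python. It is not the authors' implementation. It assumes a one-parameter 'generator' that just shifts random noise by `b`, a logistic 'discriminator' with weights `w` and `c`, and a fitness score that only measures how well the generated samples fool the discriminator (the paper also scores diversity, which is left out here). Each generation, the parent generator is mutated by taking one gradient step under three different training objectives, and the child with the best fitness survives. All names and hyperparameters are hypothetical.

```python
import math
import random

def sigmoid(s):
    # Numerically safe logistic function.
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)

def discriminate(x, w, c):
    """Toy discriminator: probability that sample x is real."""
    return sigmoid(w * x + c)

# Gradient of each generator objective with respect to the shift b, for one
# generated sample with discriminator score d. Each objective is one "mutation".
MUTATIONS = {
    "minimax": lambda d, w: -d * w,                               # minimize log(1 - D)
    "heuristic": lambda d, w: -(1.0 - d) * w,                     # minimize -log(D)
    "least_squares": lambda d, w: 2 * (d - 1) * d * (1 - d) * w,  # minimize (D - 1)^2
}

def mutate(b, w, c, noise, kind, lr=0.5):
    """Child generator: one gradient-descent step on b under the chosen objective."""
    grads = [MUTATIONS[kind](discriminate(b + z, w, c), w) for z in noise]
    return b - lr * sum(grads) / len(grads)

def fitness(b, w, c, noise):
    """Quality-only fitness: mean discriminator score on generated samples."""
    return sum(discriminate(b + z, w, c) for z in noise) / len(noise)

def evolve_step(b, w, c, noise):
    """Create one child per mutation and keep the fittest."""
    children = [mutate(b, w, c, noise, kind) for kind in MUTATIONS]
    return max(children, key=lambda child: fitness(child, w, c, noise))

def train_discriminator(b, w, c, real, noise, lr=0.1):
    """One gradient step on the standard real-versus-fake log loss."""
    dw = dc = 0.0
    for x in real:
        g = -(1.0 - discriminate(x, w, c))
        dw += g * x / len(real)
        dc += g / len(real)
    for z in noise:
        g = discriminate(b + z, w, c)
        dw += g * (b + z) / len(noise)
        dc += g / len(noise)
    return w - lr * dw, c - lr * dc

def run_toy(generations=50, seed=0):
    rng = random.Random(seed)
    b, w, c = 0.0, 0.1, 0.0
    for _ in range(generations):
        real = [rng.gauss(4.0, 1.0) for _ in range(64)]   # "real" data centered at 4
        noise = [rng.gauss(0.0, 1.0) for _ in range(64)]
        w, c = train_discriminator(b, w, c, real, noise)
        b = evolve_step(b, w, c, noise)
    return b

print(run_toy())
```

The point of the sketch is the shape of the loop, not the result: instead of committing to a single training objective, the generator tries several and lets the discriminator's feedback pick the survivor each round.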

This may all seem very strange, but consider that you are already using an internet where many independent ML models interact without your involvement. Advertising tracking, website usage metrics, and content surfacing all interplay in the background every time you navigate the internet. It's not preposterous to think of a world where the very pages you see and the tools you use are rapidly iterated based on your ideal use case, and likely priced accordingly.

These are some of the potential futures of the web post GPT-3, but it is easy to imagine use cases outside of the internet. I for one would love to see more generative design in bioengineering and physical infrastructure development - more atoms and fewer bits. Hyper-user-optimized web experiences may not be the exact future, but generative design and its implications are here to stay and may lead to a monumental shift in the technology economy.

Do you know of any companies working on generative design for software, or have any crazy implications of the GPT-3 model that you'd like to share? Reach out to us on Twitter @TheBackBounce