Welcome back to The Back Bounce. We're going to try to avoid talking about COVID-19 too much, not because we don't care, but because everyone is probably quite tired of hearing about it and will be until there's a vaccine.

Today we will, however, talk a bit about health tech in general. Octant Bio, a biotech focused on novel drug discovery, came out of stealth yesterday. Octant is looking to use the decreasing cost of genetic sequencing, progress in genetic engineering, and advanced computational approaches to search for drugs that alter the activity of multiple drug targets and pathways.

"We design and build hundreds to thousands of genetic programs at a time, and engineer them into human cell lines. Each engineered line is designed to test a particular drug target, which if activated, turns on the expression of an engineered genetic barcode. The cell lines are mixed together, drugged, and all the barcodes are measured at once using next-generation RNA sequencing. In each platform run, we use high-throughput chemical synthesis and screening to test thousands of drugs against these libraries per run, and we have built experimental and computational pipelines to generate and analyze these datasets routinely. While we have begun with GPCRs (G-Protein Coupled Receptors), we will ultimately map each of the ~20,000 human genes that is relevant as a drug target." - Sri Kosuri, CEO
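To make the barcode idea a bit more concrete, here is a minimal sketch of how pooled barcode reads could be turned into a per-target activity signal. This is our own toy illustration, not Octant's pipeline: the barcode sequences, target names, and the `count_targets` and `fold_change` helpers are all hypothetical.

```python
from collections import Counter

# Hypothetical barcode -> drug target mapping (illustrative names only).
BARCODE_TO_TARGET = {
    "ACGT": "GPCR_A",
    "TTAG": "GPCR_B",
    "GGCA": "GPCR_C",
}


def count_targets(reads):
    """Count sequenced barcode reads per target, skipping unknown barcodes."""
    counts = Counter()
    for read in reads:
        target = BARCODE_TO_TARGET.get(read)
        if target is not None:
            counts[target] += 1
    return counts


def fold_change(treated, control, pseudocount=1):
    """Ratio of treated to control read counts per target.

    The pseudocount avoids division by zero for targets with no reads.
    """
    return {
        target: (treated[target] + pseudocount) / (control[target] + pseudocount)
        for target in BARCODE_TO_TARGET.values()
    }


# Toy data: the drug appears to switch on GPCR_A's barcode only.
control = count_targets(["ACGT", "TTAG", "GGCA", "NNNN"])
treated = count_targets(["ACGT"] * 5 + ["TTAG", "GGCA"])
print(fold_change(treated, control))
```

In this toy run only the hypothetical GPCR_A barcode is enriched after treatment, which is the kind of signal a pooled screen looks for across many targets at once.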

Their Series A was led by a16z and included 8VC, Allen & Co, and 50 Years. It follows a seed round raised from Nanodimension, Ulu Ventures, Refactor Capital, SV Angel, Lux Capital, and private investors.

They are joining a growing wave of novel drug discovery startups, with 110 listed on AngelList and recent notable exits like Flatiron Health, whose data platform for oncology treatment development led to a $1.9B acquisition by Roche. This is inspiring news for the pharmaceutical industry, which has long suffered from a phenomenon known as Eroom's law: the inflation-adjusted cost of bringing a new drug to market roughly doubles every nine years.
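For a feel of what a nine-year doubling period compounds to, here is a back-of-the-envelope sketch. It assumes a clean exponential trend, which is our simplification, and the function name `eroom_cost_multiplier` is ours.

```python
def eroom_cost_multiplier(years, doubling_period=9.0):
    """Relative cost after `years`, assuming cost doubles every `doubling_period` years."""
    return 2.0 ** (years / doubling_period)


for years in (9, 18, 27, 45):
    print(f"after {years} years: {eroom_cost_multiplier(years):.0f}x the starting cost")
```

Under that assumption, costs are 8x higher after 27 years and 32x higher after 45.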

Some estimates put the average cost of bringing a new drug to market at a whopping $2.6 billion. This likely has to do with the very high failure rate of ~88% for new therapies. Companies like Octant have the opportunity for massive disruption, creating drugs that target specific pathways with significantly less collateral damage. The extremely high cost of new drugs currently gets passed on to the consumer; ideally, more rapid and accurate development processes will lead to lower drug prices while still creating massive investor value. To get an idea of the ways companies are using computation to interact with genetic processes, we recommend this video from Asimov at the ScaledML conference.
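A rough way to see why the failure rate matters so much: if roughly 88% of candidates fail, each approval has to carry the cost of the failures too. The sketch below assumes, purely for illustration, that every candidate costs the same and that outcomes are independent; the function name is ours.

```python
def attempts_per_approval(failure_rate):
    """Expected number of candidates tried per approved drug (geometric model)."""
    if not 0.0 <= failure_rate < 1.0:
        raise ValueError("failure_rate must be in [0, 1)")
    return 1.0 / (1.0 - failure_rate)


attempts = attempts_per_approval(0.88)
total_cost = 2.6e9  # the ~$2.6 billion estimate quoted above

print(f"candidates per approval: {attempts:.1f}")
# Illustrative assumption only: every candidate costs the same.
print(f"implied spend per candidate: ${total_cost / attempts / 1e6:.0f}M")
```

Under those simplifying assumptions, cutting the failure rate even modestly shrinks the number of attempts each success has to pay for.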

Microsoft's AI writes code

The Microsoft Build broadcast had a section with Kevin Scott and friends where they discussed the general future of computation and advances in AI on the Microsoft Azure platform. One of the highlights was the Turing-NLG model, a 17-billion-parameter Generic Intent Encoder that encodes phrases into semantic vectors such that similar sentences have closer vector representations. This appears to be an advancement on GPT-2 and other previous language models. It was built with cooperation from OpenAI and seems to have an incredible ability for summarization and contextually appropriate text generation. It scores higher than Megatron and GPT-2 on LAMBADA next-word prediction accuracy, and lower (which is better) on WikiText-103 perplexity.
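To illustrate what "closer vector representations" means in practice, here is a small sketch using cosine similarity, a common way to compare sentence embeddings. The three-dimensional vectors are made up for illustration and do not come from any Microsoft model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings: two paraphrases and one unrelated sentence.
book_flight = [0.9, 0.1, 0.2]
reserve_plane_ticket = [0.8, 0.2, 0.1]
pasta_recipe = [0.1, 0.9, 0.3]

print(cosine_similarity(book_flight, reserve_plane_ticket))
print(cosine_similarity(book_flight, pasta_recipe))
```

With these toy vectors the two paraphrases score much closer to 1 than the unrelated pair does.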

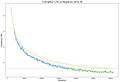

Validation perplexity of Megatron-8B parameter model (orange line) vs T-NLG 17B model during training (blue and green lines). The dashed line represents the lowest validation loss achieved by the current public state of the art model. The transition from blue to green in the figure indicates where T-NLG outperforms public state of the art.
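For readers less familiar with the metric, perplexity is the exponential of the average negative log-probability a model assigns to the correct next tokens, so lower is better. A minimal sketch, with made-up probabilities rather than real model outputs:

```python
import math


def perplexity(token_probs):
    """exp of the mean negative log-probability assigned to the correct tokens."""
    avg_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_nll)


# A model that gives every correct token probability 0.25 is as uncertain
# as a uniform guess among 4 options, so its perplexity is about 4.
print(perplexity([0.25, 0.25, 0.25, 0.25]))

# More confident (and correct) predictions give a lower perplexity.
print(perplexity([0.9, 0.8, 0.95, 0.7]))
```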

The really intriguing part was watching Sam Altman showcase the Turing-NLG model, after training it on GitHub data, writing code (around minute 29:00 of the video). While training models to write code isn't particularly new (Google has been doing it for years), the really interesting part is the natural language aspect of it. Sam Altman was able to show the model writing fairly accurate code based largely on comments alone. This would likely be a significant step up for any predictive IDE and would let engineers focus more on intention and design as opposed to redundant work. If anyone has academic access to the API, we're eager to hear about its use cases. For more on the model, check out this link: https://www.microsoft.com/en-us/research/blog/turing-nlg-a-17-billion-parameter-language-model-by-microsoft/

Other Interesting News

People are pretending to leave cities and other people are pretending to care

Facebook is partnering with Shopify to become the Digital Mall no one actually wants.

Nvidia Smashes Earnings with data-center sales topping $1B

Retail investors are piling into the market to catch the V-shaped recovery. Or is it just the new Robinhood logo?

Podcasts that got us thinking Meta things

A16z on Podcasting and the future of audio - goes pretty deep on what’s missing to enable the industry

This Week In Startups - News Round Table discussing Clubhouse, Spotify buying Rogan, and more

If you're a podcaster or are building part of the pod tech stack, we'd love to chat about the pros and cons and where you see the future of the industry (hint: we see incoming disruption).

In tomorrow's episode: Northrop Grumman gets a $2.3B Space Force contract - is the DOD actually as supportive of tech as it claims to be? Also, whatever else pops up that piques our interest.

Next week we're going to look into how WFH is changing the attack surface for cybersecurity and the vulnerabilities you should know about - hit us up with info.

As always, we'd love to cover your startup or portfolio companies. Get at us on Twitter @TheBackBounce.

Share with people you consider worthy.